Original

Working with Kafka from Java

Note:

This article was last updated on March 21, 2019, more than 2,621 days ago. If any image in the article fails to load, please leave a comment or contact me directly.

pom dependencies:

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>0.11.0.0</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.12</artifactId>

<version>0.11.0.0</version>

</dependency>

<dependency>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>1.2.17</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>0.11.0.2</version>

</dependency>

Creating a producer

Java code:

package com.lzhpo.kafka.createProducer;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.Properties;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:

* Create a producer (new API)

* </p>

*/

public class NewProducer {

public static void main(String[] args) {

Properties properties = new Properties();

// Host name and port of the Kafka broker

properties.put("bootstrap.servers", "192.168.200.111:9092");

// Wait for acknowledgement from all in-sync replicas

properties.put("acks", "all");

// Number of times a failed send is retried (0 disables retries)

properties.put("retries", 0);

// Upper bound, in bytes, on the size of one batch of records

properties.put("batch.size", 16384);

// How long to wait, in milliseconds, for more records before sending a batch

properties.put("linger.ms", 1);

// Total memory, in bytes, available for buffering records waiting to be sent

properties.put("buffer.memory",33554432);

// Key serializer

properties.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// Value serializer

properties.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

for (int i = 0; i < 50; i++) {

producer.send(new ProducerRecord<String, String>("first", Integer.toString(i), "HelloWorld-" +i));

}

producer.close();

}

}
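The same producer settings are repeated in every producer example in this article. As a minimal sketch, they could be collected in one helper. The class `ProducerProps` and its constants are hypothetical names introduced here for illustration; they are not part of the original code or of the Kafka API. The sketch uses only `java.util.Properties`, so it runs without a broker.

```java
import java.util.Properties;

/** Hypothetical helper (not part of the original post) that collects the shared producer settings. */
final class ProducerProps {

    // The literal values used in the article, written out so their units are visible.
    static final int BATCH_SIZE_BYTES = 16 * 1024;           // 16384 bytes (16 KiB)
    static final int BUFFER_MEMORY_BYTES = 32 * 1024 * 1024; // 33554432 bytes (32 MiB)

    /** Builds the producer configuration used in this article for the given broker address. */
    static Properties build(String bootstrapServers) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("acks", "all");
        props.put("retries", 0);
        props.put("batch.size", BATCH_SIZE_BYTES);
        props.put("linger.ms", 1);
        props.put("buffer.memory", BUFFER_MEMORY_BYTES);
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) {
        Properties props = build("192.168.200.111:9092");
        System.out.println("batch.size = " + props.get("batch.size"));
        System.out.println("buffer.memory = " + props.get("buffer.memory"));
    }
}
```

The resulting `Properties` object could then be passed to `new KafkaProducer<>(...)` exactly as in the listing above.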

First, create a topic in Kafka:

kafka-topics.sh --zookeeper localhost:2181 --create --replication-factor 1 --partitions 1 --topic first

Then start a console consumer in Kafka (to view the messages sent from Java):

kafka-console-consumer.sh --zookeeper localhost:2181 --from-beginning --topic first

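To send with a callback (this also requires importing org.apache.kafka.clients.producer.Callback and org.apache.kafka.clients.producer.RecordMetadata):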

KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

for (int i = 0; i < 50; i++) {

producer.send(new ProducerRecord<String, String>("first", "Hello" + i), new Callback() {

@Override

public void onCompletion(RecordMetadata recordMetadata, Exception e) {

if (recordMetadata != null){

System.out.println(recordMetadata.partition() + "---" +recordMetadata.offset());

}

}

});

}

Consumer

package com.lzhpo.kafka.consumer.highAPI;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.util.Arrays;

import java.util.Properties;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:

* Official example (offsets are committed automatically) (new API)

* </p>

*/

public class CustomNewConsumer {

public static void main(String[] args) {

Properties props = new Properties();

// Address of the Kafka service; not every broker needs to be listed

props.put("bootstrap.servers", "192.168.200.111:9092");

// Specify the consumer group

props.put("group.id", "test");

// Whether to commit offsets automatically

props.put("enable.auto.commit", "true");

// Interval between automatic offset commits, in milliseconds

props.put("auto.commit.interval.ms", "1000");

// Key deserializer class

props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

// Value deserializer class

props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

// Create the consumer

KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

// Topics the consumer subscribes to; several can be subscribed at once

consumer.subscribe(Arrays.asList("first", "second","third"));

while (true) {

// Fetch records, waiting at most 100 ms

ConsumerRecords<String, String> records = consumer.poll(100);

for (ConsumerRecord<String, String> record : records)

System.out.printf("offset = %d, key = %s, value = %s%n", record.offset(), record.key(), record.value());

}

}

}

Start a console producer in Kafka:

kafka-console-producer.sh --broker-list localhost:9092 --topic first

Then run the Java code, send messages from the Kafka console producer, and check the received messages in the IDEA console.

Custom partitioner

package com.lzhpo.kafka.custompartitionProducer;

import org.apache.kafka.clients.producer.Partitioner;

import org.apache.kafka.common.Cluster;

import java.util.Map;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:

* Custom partitioner (new API)

* </p>

*/

public class CustomPartitioner implements Partitioner {

@Override

public int partition(String topic, Object key, byte[] keyBytes, Object value, byte[] valueBytes, Cluster cluster) {

// Choose the partition; this example always sends to partition 0

return 0;

}

@Override

public void close() {

}

@Override

public void configure(Map<String, ?> map) {

}

}
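The partitioner above always returns 0, so every record lands in the same partition. As a minimal sketch of what a key-based choice could look like, the arithmetic below maps the serialized key to a partition number. The class `KeyHashPartitionSketch` and its method are hypothetical names introduced for illustration. `Arrays.hashCode` is an illustrative hash chosen to keep the sketch free of Kafka dependencies; it is not the hash that Kafka's default partitioner uses. Sending keyless records to partition 0 is likewise an assumption of this sketch.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

/** Hypothetical, Kafka-free sketch of the arithmetic a key-based partitioner could use. */
final class KeyHashPartitionSketch {

    /**
     * Maps a serialized key to a partition in the range [0, numPartitions).
     * The same key bytes always map to the same partition.
     */
    static int choosePartition(byte[] keyBytes, int numPartitions) {
        if (numPartitions <= 0) {
            throw new IllegalArgumentException("numPartitions must be positive");
        }
        if (keyBytes == null) {
            return 0; // assumption of this sketch: records without a key go to partition 0
        }
        // floorMod keeps the result non-negative even when the hash is negative.
        return Math.floorMod(Arrays.hashCode(keyBytes), numPartitions);
    }

    public static void main(String[] args) {
        int numPartitions = 3; // illustrative value; the topic in this article has one partition
        for (int i = 0; i < 5; i++) {
            byte[] keyBytes = Integer.toString(i).getBytes(StandardCharsets.UTF_8);
            System.out.println("key " + i + " -> partition " + choosePartition(keyBytes, numPartitions));
        }
    }
}
```

Inside `partition(...)`, the partition count could be taken from `cluster.partitionsForTopic(topic).size()` and passed in together with the key bytes. With the single-partition topic created earlier, the result is always 0 either way.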

package com.lzhpo.kafka.custompartitionProducer;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.Properties;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:

* To watch the partition change in Kafka, run tail -f 00000000000000000000.log inside the first-0 directory under the logs directory

*

* </p>

*/

public class App {

public static void main(String[] args) {

Properties props = new Properties();

// Host name and port of the Kafka broker

props.put("bootstrap.servers", "192.168.200.111:9092");

// Wait for acknowledgement from all in-sync replicas

props.put("acks", "all");

// Number of times a failed send is retried (0 disables retries)

props.put("retries", 0);

// Upper bound, in bytes, on the size of one batch of records

props.put("batch.size", 16384);

// How long to wait, in milliseconds, for more records before sending a batch

props.put("linger.ms", 1);

// Total memory, in bytes, available for buffering records waiting to be sent

props.put("buffer.memory", 33554432);

// Key serializer

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// Value serializer

props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// Custom partitioner (com.lzhpo.kafka.custompartitionProducer.CustomPartitioner)

props.put("partitioner.class", "com.lzhpo.kafka.custompartitionProducer.CustomPartitioner");

Producer<String, String> producer = new KafkaProducer<>(props);

producer.send(new ProducerRecord<String, String>("first", "1", "lzhpo"));

producer.close();

}

}

In Kafka, watch the partition change by running tail -f 00000000000000000000.log inside the first-0 directory under the logs directory.

Custom interceptors

package com.lzhpo.kafka.interceptor;

import org.apache.kafka.clients.producer.ProducerInterceptor;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.clients.producer.RecordMetadata;

import java.util.Map;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:</p>

*/

public class TimeInterceptor implements ProducerInterceptor<String, String> {

@Override

public void configure(Map<String, ?> configs) {

}

@Override

public ProducerRecord<String, String> onSend(ProducerRecord<String, String> record) {

// Create a new record with the timestamp prepended to the message value

return new ProducerRecord<>(record.topic(), record.partition(), record.timestamp(), record.key(),

System.currentTimeMillis() + "," + record.value().toString());

}

@Override

public void onAcknowledgement(RecordMetadata metadata, Exception exception) {

}

@Override

public void close() {

}

}

package com.lzhpo.kafka.interceptor;

import org.apache.kafka.clients.producer.ProducerInterceptor;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.clients.producer.RecordMetadata;

import java.util.Map;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:</p>

*/

public class CounterInterceptor implements ProducerInterceptor<String, String> {

private int errorCounter = 0;

private int successCounter = 0;

@Override

public void configure(Map<String, ?> configs) {

}

@Override

public ProducerRecord<String, String> onSend(ProducerRecord<String, String> record) {

return record;

}

@Override

public void onAcknowledgement(RecordMetadata metadata, Exception exception) {

// Count successful and failed sends

if (exception == null) {

successCounter++;

} else {

errorCounter++;

}

}

@Override

public void close() {

// Print the results

System.out.println("Successful sent: " + successCounter);

System.out.println("Failed sent: " + errorCounter);

}

}

package com.lzhpo.kafka.interceptor;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.ArrayList;

import java.util.List;

import java.util.Properties;

/**

* <p> Author:lzhpo </p>

* <p> Title:</p>

* <p> Description:

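* A simple interceptor chain made of two interceptors. The first prepends a timestamp to the message value before the message is sent; the second updates the count of successful or failed sends after the message is sent.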

* </p>

*/

public class App {

public static void main(String[] args) throws Exception {

// 1. Set up the configuration

Properties props = new Properties();

props.put("bootstrap.servers", "192.168.200.111:9092");

props.put("acks", "all");

props.put("retries", 0);

props.put("batch.size", 16384);

props.put("linger.ms", 1);

props.put("buffer.memory", 33554432);

props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// 2. Build the interceptor chain

List<String> interceptors = new ArrayList<>();

interceptors.add("com.lzhpo.kafka.interceptor.TimeInterceptor");

interceptors.add("com.lzhpo.kafka.interceptor.CounterInterceptor");

props.put(ProducerConfig.INTERCEPTOR_CLASSES_CONFIG, interceptors);

String topic = "first";

Producer<String, String> producer = new KafkaProducer<>(props);

// 3. Send messages

for (int i = 0; i < 10; i++) {

ProducerRecord<String, String> record = new ProducerRecord<>(topic, "message" + i);

producer.send(record);

}

// 4. Always close the producer; only then are the interceptors' close methods called

producer.close();

}

}
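To make the order of operations in the chain easier to follow, here is a minimal, Kafka-free model of it. The class `InterceptorChainSketch` and its methods are hypothetical names introduced for illustration and only imitate the behavior of the two interceptors above on plain strings; they are not the Kafka API. The sketch assumes that each `onSend` step receives the output of the previous step, in registration order.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

/** Hypothetical, Kafka-free model of a two-step interceptor chain working on message values. */
final class InterceptorChainSketch {

    private final List<UnaryOperator<String>> onSendSteps = new ArrayList<>();
    private int successCounter = 0;
    private int errorCounter = 0;

    /** Registers a step; steps run in the order they were added. */
    void addOnSend(UnaryOperator<String> step) {
        onSendSteps.add(step);
    }

    /** Applies every onSend step in order, feeding each result to the next step. */
    String onSend(String value) {
        String current = value;
        for (UnaryOperator<String> step : onSendSteps) {
            current = step.apply(current);
        }
        return current;
    }

    /** Mirrors CounterInterceptor.onAcknowledgement: a null exception counts as a success. */
    void onAcknowledgement(Exception exception) {
        if (exception == null) {
            successCounter++;
        } else {
            errorCounter++;
        }
    }

    int successes() { return successCounter; }

    int failures() { return errorCounter; }

    public static void main(String[] args) {
        InterceptorChainSketch chain = new InterceptorChainSketch();
        chain.addOnSend(v -> System.currentTimeMillis() + "," + v); // like TimeInterceptor
        chain.addOnSend(v -> v);                                    // like CounterInterceptor.onSend
        for (int i = 0; i < 10; i++) {
            System.out.println(chain.onSend("message" + i));
            chain.onAcknowledgement(null); // pretend every send succeeded
        }
        System.out.println("Successful sent: " + chain.successes());
        System.out.println("Failed sent: " + chain.failures());
    }
}
```

In the real producer, the counters are only printed when `producer.close()` triggers each interceptor's `close()` method, which is why step 4 in the listing above matters.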

First, start a consumer in Kafka:

kafka-console-consumer.sh --zookeeper localhost:2181 --from-beginning --topic first

Java console:

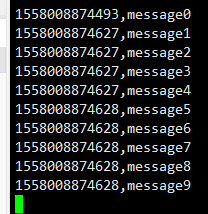

Consumer in Kafka:

- Tags: Kafka

- Link: http://www.lzhpo.com/article/13

- Copyright: Originally published by lzhpo. Reposts must follow the Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0) license.